Last December, Sébastien Stormacq wrote about the availability of a distributed map state for AWS Step Functions, a new feature that allows you to orchestrate large-scale parallel workloads in the cloud. That's when Charles Burton, a data systems engineer for a company called CyberGRX, found out about it and refactored his workflow, reducing the processing time for his machine learning (ML) processing job from 8 days to 56 minutes. Before, running the job required an engineer to constantly monitor it. Now, it runs in less than an hour with no support needed. In addition, the new implementation with AWS Step Functions Distributed Map costs less than the original did.

What CyberGRX achieved with this solution is a perfect example of what serverless technologies embrace: letting the cloud do as much of the undifferentiated heavy lifting as possible so the engineers and data scientists have more time to focus on what's important for the business. In this case, that means continuing to improve the model and the processes for one of the key offerings from CyberGRX, a cyber risk assessment of third parties using ML insights from its large and growing database.

What's the business challenge?

CyberGRX shares third-party cyber risk (TPCRM) data with their customers. They predict, with high confidence, how a third-party company will respond to a risk assessment questionnaire. To do this, they have to run their predictive model on every company in their platform. They currently have predictive data on more than 225,000 companies. Whenever there's a new company or the data changes for a company, they regenerate their predictive model by processing their entire dataset. Over time, CyberGRX data scientists improve the model or add new features to it, which also requires the model to be regenerated.

The challenge is running this job for 225,000 companies in a timely manner, with as few hands-on resources as possible. The job runs a set of operations for each company, and every company calculation is independent of other companies. This means that in the ideal case, every company can be processed at the same time. However, implementing such a massive parallelization is a challenging problem to solve.

First iteration

With that in mind, the company built their first iteration of the pipeline using Kubernetes and Argo Workflows, an open-source container-native workflow engine for orchestrating parallel jobs on Kubernetes. These were tools they were familiar with, as they were already using them in their infrastructure.

But as soon as they tried to run the job for all the companies on the platform, they ran up against the limits of what their system could handle efficiently. Because the solution depended on a centralized controller, Argo Workflows, it was not robust, and the controller was scaled to its maximum capacity during this time. At that time, they only had 150,000 companies. Running the job with all of the companies took around 8 days, during which the system would crash and need to be restarted. It was very labor intensive, and it always required an engineer on call to monitor and troubleshoot the job.

The tipping point came when Charles joined the Analytics team at the beginning of 2022. One of his first tasks was to do a full model run on approximately 170,000 companies at that time. The model run lasted the whole week and ended at 2:00 AM on a Sunday. That's when he decided their system needed to evolve.

Second iteration

With the pain of the last time he ran the model fresh in his mind, Charles thought through how he could rewrite the workflow. His first thought was to use AWS Lambda and SQS, but he realized that he needed an orchestrator in that solution. That's why he chose Step Functions, a serverless service that helps you automate processes, orchestrate microservices, and create data and ML pipelines. Plus, it scales as needed.

Charles got the new version of the workflow with Step Functions working in about 2 months. The first step he took was adapting his existing Docker image to run in Lambda using Lambda's container image packaging format. Because the container already worked for his data processing tasks, this update was simple. He scheduled Lambda provisioned concurrency to make sure that all functions he needed were ready when he started the job. He also configured reserved concurrency to make sure that Lambda would be able to handle this maximum number of concurrent executions at a time. In order to support so many functions executing at the same time, he raised the per-account concurrent execution quota for Lambda.
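The two concurrency settings described above can be sketched with the AWS SDK for Python. This is a minimal illustration, not the CyberGRX code: the function name, alias, and concurrency value are hypothetical, and the sketch sets provisioned concurrency directly rather than on a schedule as Charles did. It assumes `lambda_client` is a boto3 Lambda client.

```python
def configure_concurrency(lambda_client, function_name, alias, max_concurrency):
    """Reserve and pre-warm capacity for one Lambda function.

    `lambda_client` is assumed to be a boto3 Lambda client, for example
    boto3.client("lambda"). All argument values are illustrative.
    """
    # Reserved concurrency: sets aside (and caps) this function's share of
    # the account-level concurrent execution quota.
    lambda_client.put_function_concurrency(
        FunctionName=function_name,
        ReservedConcurrentExecutions=max_concurrency,
    )
    # Provisioned concurrency: keeps execution environments initialized for
    # a published version or alias so they are ready when the job starts.
    lambda_client.put_provisioned_concurrency_config(
        FunctionName=function_name,
        Qualifier=alias,
        ProvisionedConcurrentExecutions=max_concurrency,
    )
```

A call might look like `configure_concurrency(boto3.client("lambda"), "score-company", "live", 1000)`, where every value is a placeholder. Raising the account quota itself is a separate request and is not covered here.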

And to make sure that the steps were run in parallel, he used Step Functions and the map state. The map state allowed Charles to run a set of workflow steps for each item in a dataset. The iterations run in parallel. Because the Step Functions map state offers 40 concurrent executions and CyberGRX needed more parallelization, they created a solution that launched multiple state machines in parallel. In this way, they were able to iterate fast across all the companies. Creating this complex solution required a preprocessor that handled the heuristics of the concurrency of the system and split the input data across multiple state machines.
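To make the preprocessor's job concrete, here is a rough sketch of the input-splitting part only. It is not the CyberGRX implementation, whose heuristics are not described in this post. The function names and the even-split strategy are assumptions for illustration; only the limit of 40 concurrent iterations per map state comes from the text above.

```python
from typing import List, Sequence

MAP_STATE_CONCURRENCY = 40  # per-map-state concurrency cited in the article


def split_into_batches(company_ids: Sequence[str], num_state_machines: int) -> List[List[str]]:
    """Split IDs into near-equal contiguous batches, one per state machine execution."""
    if num_state_machines < 1:
        raise ValueError("num_state_machines must be at least 1")
    base, extra = divmod(len(company_ids), num_state_machines)
    batches: List[List[str]] = []
    start = 0
    for i in range(num_state_machines):
        # The first `extra` batches take one additional item each.
        size = base + (1 if i < extra else 0)
        if size == 0:
            break  # fewer items than state machines: stop creating batches
        batches.append(list(company_ids[start:start + size]))
        start += size
    return batches


def effective_parallelism(num_state_machines: int) -> int:
    """Upper bound on concurrent iterations across all launched state machines."""
    return num_state_machines * MAP_STATE_CONCURRENCY
```

Under these assumptions, launching 25 state machines in parallel would allow at most 25 × 40 = 1,000 iterations at once, which is why the preprocessor had to launch many executions and keep track of them.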

This second iteration was already better than the first one, as now it was able to finish the execution with no problems, and it could iterate over 200,000 companies in 90 minutes. However, the preprocessor was a very complex part of the system, and it was hitting the limits of the Lambda and Step Functions APIs due to the amount of parallelization.

Third and final iteration

Then, during AWS re:Invent 2022, AWS announced a distributed map for Step Functions, a new type of map state that allows you to write Step Functions workflows that coordinate large-scale parallel workloads. Using this new feature, you can easily iterate over millions of objects stored in Amazon Simple Storage Service (Amazon S3), and then the distributed map can launch up to 10,000 parallel sub-workflows to process the data.

When Charles read in the News Blog article about the 10,000 parallel workflow executions, he immediately thought about trying this new state. In a couple of months, Charles built the new iteration of the workflow.

Because the distributed map state split the input into different processors and handled the concurrency of the different executions, Charles was able to drop the complex preprocessor code.
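For readers who have not seen a distributed map, the following sketch builds a small state machine definition in Amazon States Language as a Python dictionary. It is an assumption-laden illustration rather than the CyberGRX workflow: the state names, the static bucket and key, and the single Lambda task are hypothetical, and the SNS publishing step is omitted.

```python
import json


def build_distributed_map_definition(bucket, key, function_arn, max_concurrency=10000):
    """Return a state machine definition with one distributed map state.

    All names and ARNs passed in are placeholders chosen by the caller.
    """
    return {
        "StartAt": "ProcessCompanies",
        "States": {
            "ProcessCompanies": {
                "Type": "Map",
                # Read the items to iterate over from a JSON array stored in S3.
                "ItemReader": {
                    "Resource": "arn:aws:states:::s3:getObject",
                    "ReaderConfig": {"InputType": "JSON"},
                    "Parameters": {"Bucket": bucket, "Key": key},
                },
                # DISTRIBUTED mode runs each iteration as a child workflow.
                "ItemProcessor": {
                    "ProcessorConfig": {"Mode": "DISTRIBUTED", "ExecutionType": "STANDARD"},
                    "StartAt": "ScoreCompany",
                    "States": {
                        "ScoreCompany": {
                            "Type": "Task",
                            "Resource": "arn:aws:states:::lambda:invoke",
                            "Parameters": {"FunctionName": function_arn, "Payload.$": "$"},
                            "End": True,
                        }
                    },
                },
                "MaxConcurrency": max_concurrency,
                "End": True,
            }
        },
    }


if __name__ == "__main__":
    definition = build_distributed_map_definition(
        "example-input-bucket",  # hypothetical bucket
        "companies.json",        # hypothetical key
        "arn:aws:lambda:us-east-1:123456789012:function:score-company",  # placeholder ARN
    )
    print(json.dumps(definition, indent=2))
```

The point of the sketch is that splitting the input and capping concurrency are declared in the state itself through `ItemReader` and `MaxConcurrency`, which is the work the custom preprocessor used to do.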

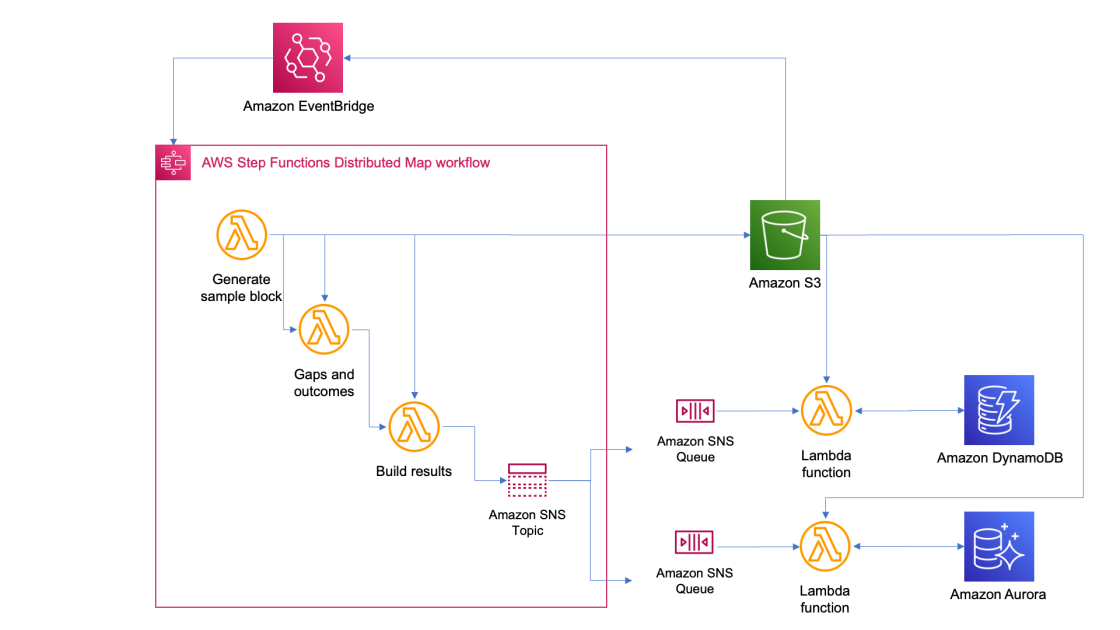

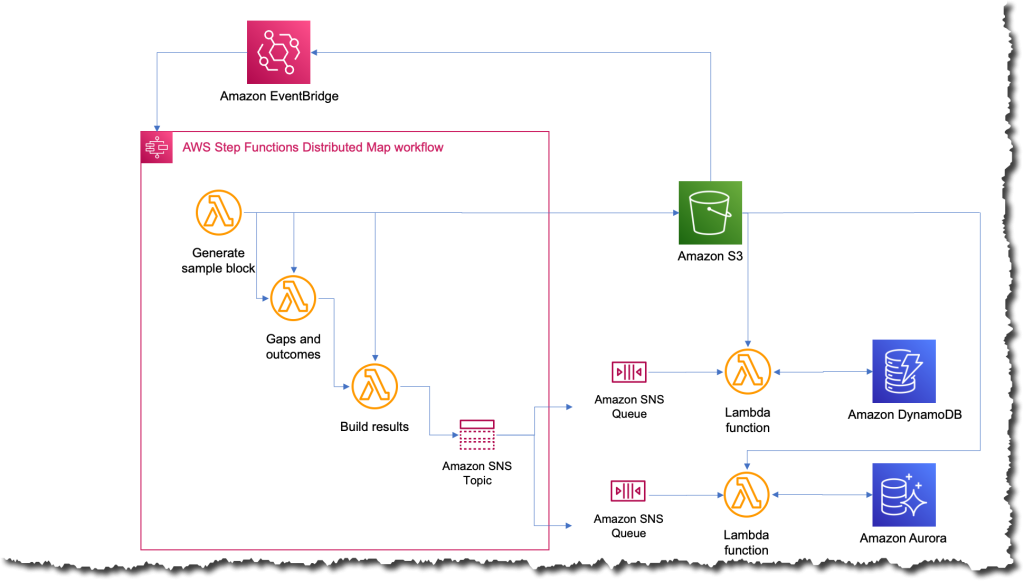

The new process was the simplest that it's ever been. Now, whenever they want to run the job, they just upload a file to Amazon S3 with the input data. This action triggers an Amazon EventBridge rule that targets the state machine with the distributed map. The state machine then executes with that file as an input and publishes the results to an Amazon Simple Notification Service (Amazon SNS) topic.
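The trigger described above can be pictured as an EventBridge event pattern that matches S3 "Object Created" events. The sketch below is one plausible shape for such a rule, not the rule CyberGRX uses: the bucket name and key prefix are hypothetical, and it assumes the bucket has EventBridge notifications turned on.

```python
import json


def build_s3_upload_pattern(bucket_name, key_prefix):
    """Event pattern matching new objects under a prefix in one bucket."""
    return {
        "source": ["aws.s3"],
        "detail-type": ["Object Created"],
        "detail": {
            "bucket": {"name": [bucket_name]},
            "object": {"key": [{"prefix": key_prefix}]},
        },
    }


def input_location(event):
    """Extract the (bucket, key) of the uploaded file from a matched event."""
    detail = event["detail"]
    return detail["bucket"]["name"], detail["object"]["key"]


if __name__ == "__main__":
    # Hypothetical bucket and prefix, for illustration only.
    print(json.dumps(build_s3_upload_pattern("example-input-bucket", "runs/"), indent=2))
```

The rule's target would be the state machine, which can then read the bucket and key from the event it receives as input.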

What was the impact?

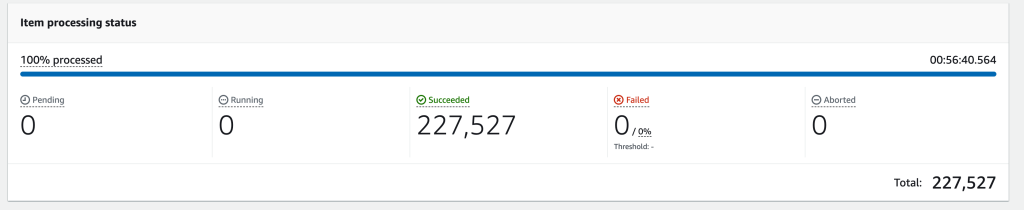

A couple of months after completing the third iteration, they had to run the job on all 227,000 companies in their platform. When the job finished, Charles' team was blown away: the whole process took only 56 minutes to complete. They estimated that during those 56 minutes, the job ran more than 57 billion calculations.

The following image shows an Amazon CloudWatch graph of the concurrent executions for one Lambda function during the time that the workflow was running. There are almost 10,000 functions running in parallel during this time.

Simplifying and shortening the time to run the job opens a lot of possibilities for CyberGRX and the data science team. The benefits started right away, the moment one of the data scientists wanted to run the job to test some improvements they had made to the model. They were able to run it independently without requiring an engineer to help them.

And, because the predictive model itself is one of the key offerings from CyberGRX, the company now has a more competitive product since the predictive analysis can be refined on a daily basis.

Learn more about using AWS Step Functions:

You can also check the Serverless Workflows Collection that we have available in Serverless Land for you to test and learn more about this new capability.

— Marcia
